Why I use dev containers for most of my projects

It is important to have a consistent and streamlined setup process for your development environment. This saves time and minimizes frustration, making you more productive and making it much easier to onboard new developers. Whether we’re talking about a company that wants to onboard new engineers or an open source project that needs more contributors, being able to press one button to get a fully functional development environment is incredibly valuable.

That’s why I was thrilled when I discovered dev containers! Dev containers use containers to automate the setup of your development environment. You can have them install compilers, tools, and more: set up a specific version of Node.js, install the AWS CLI, or run a bash script that runs a code generator, anything you need to set up your development environment. You can also run services like databases or message queues alongside your development environment, because dev containers support Docker Compose.

For example, I use dev containers to spin up Redis and CouchDB instances for a project I’m working on. It also installs pnpm, then uses it to install all the dependencies for the codebase. The end result is that you can press one button to have a fully functional development environment in under a minute.

This has many advantages. It ensures that everyone has the same version of any services or tools needed, and isolates these tools from the base system. And if you ship your code with containers, it also makes your development environment very similar to your production environment. No more “well it works on my machine” issues!

Basic setup

I use dev containers with VSCode, which has pretty good support for them. I’ve also tried the dev container CLI, which works fine if you want to keep everything in the terminal (although you could probably stick with Docker Compose alone then!).
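For reference, a terminal-only workflow with the dev container CLI might look roughly like the sketch below. It assumes the CLI is already installed (for example with `npm install -g @devcontainers/cli`) and that the repository contains a `.devcontainer` folder. The helper name `run_in_devcontainer` is made up for this post and is not part of the CLI.

```shell
# Build and start the dev container for a workspace (or reuse a running one),
# then run the given command inside it.
# Usage: run_in_devcontainer <workspace-folder> <command> [args...]
run_in_devcontainer() {
  workspace="$1"
  shift
  devcontainer up --workspace-folder "$workspace" &&
    devcontainer exec --workspace-folder "$workspace" "$@"
}
```

With that in place, something like `run_in_devcontainer . pnpm install` would bring the container up and run the command inside it.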

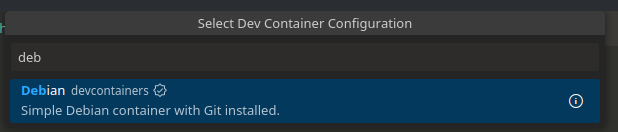

VSCode comes with commands to automatically generate a dev container configuration for you by answering a few questions.

At the core of dev containers, what sets them apart from just using Docker is “features”. These are pre-made recipes that install some tool or set up some dependency within your dev container. There are a lot of these available, installing everything from pnpm to wget. You can also set up commands to run when the container is created, or even every time the container is started, to install or set up anything else that features didn’t cover.

    {
      // ...
      "features": {
        "ghcr.io/devcontainers/features/node:1": {},
        "ghcr.io/devcontainers-contrib/features/pnpm:2": {}
      },
      "updateContentCommand": "pnpm install"
      // ...
    }

Above is an excerpt from the dev container of a project I’m working on. I needed nodejs and pnpm, and I then use pnpm to install the dependencies.

Docker compose

But honestly, I probably would not have used dev containers if this was all they did. What I find even more impressive is that they can use Docker Compose to bring up other services, like I mentioned at the beginning.

To do that, you create your Docker Compose file with all the services you need, and also add the dev container itself as a service.

    version: '3.8'
    services:
      devcontainer:
        image: mcr.microsoft.com/devcontainers/base:bullseye
        volumes:
          - ..:/workspaces/my-project:cached
        command: sleep infinity
        environment:
          - COUCHDB=http://test:test@couchdb:5984
          - S3=http://minio:9000
      couchdb:
        restart: unless-stopped
        image: couchdb:3.3
        volumes:
          - couchdb-data:/opt/couchdb/data
        environment:
          - COUCHDB_USER=test
          - COUCHDB_PASSWORD=test
      minio:
        restart: unless-stopped
        image: minio/minio
        volumes:
          - minio-data:/data
        command: server /data --console-address ":9001"
    volumes:
      couchdb-data:
      minio-data:

In the example above, I’m setting up a CouchDB database and a MinIO S3-compatible store. Docker Compose lets the containers reach each other by service name, which is why the URLs use couchdb and minio as hostnames. I pass the endpoint URLs as environment variables to my dev container, where I can read and use them.
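Once the environment is up, a quick way to confirm that those variables point at live services is a small check run inside the dev container. This is only a sketch: it assumes `curl` is available in the dev container image, it uses CouchDB's `/_up` endpoint and MinIO's `/minio/health/live` endpoint, and the function names are made up for illustration.

```shell
# Succeeds if the URL answers with a successful HTTP status.
# -f: fail on HTTP errors, -sS: quiet but still print errors, -o: discard the body.
service_is_up() {
  curl -fsS --max-time 5 -o /dev/null "$1"
}

# Checks both services using the variables set in the docker compose file.
check_services() {
  service_is_up "$COUCHDB/_up" || { echo "CouchDB is not reachable" >&2; return 1; }
  service_is_up "$S3/minio/health/live" || { echo "MinIO is not reachable" >&2; return 1; }
  echo "CouchDB and MinIO are reachable"
}
```

Running `check_services` in the dev container's terminal reports the first service that fails to answer, if any.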

Then, you just tell your dev container config to use the docker compose file.

    {
      "name": "my-project",
      "dockerComposeFile": "docker-compose.yml",
      "service": "devcontainer",
      "workspaceFolder": "/workspaces/my-project",
      // Adding the Rust compiler, plus the AWS CLI so I can access the S3 API of MinIO from the CLI.
      "features": {
        "ghcr.io/devcontainers/features/rust:1": {},
        "ghcr.io/devcontainers/features/aws-cli:1": {}
      },
      // The project I'm working on exposes the port 8080.
      // I forward that out so I can look at it in my browser.
      "forwardPorts": [8080],
      // Set up the development AWS config and credentials with test values.
      "onCreateCommand": "mkdir -p ~/.aws/ && /bin/echo -e '[default]\nregion = local' > ~/.aws/config && /bin/echo -e '[default]\naws_access_key_id = minioadmin\naws_secret_access_key = minioadmin' > ~/.aws/credentials",
      // Create the S3 bucket. $S3 is the endpoint variable set in the docker compose file.
      "postCreateCommand": "aws s3 --endpoint-url $S3 mb s3://my-bucket",
      // I found that I have to add this, but it's not the default. Not sure why.
      "remoteUser": "root",
      "customizations": {
        // You can even add in VSCode extensions that everyone working on the project
        // would need, without them having to install it on their own setup manually.
        "vscode": {
          "extensions": ["rust-lang.rust-analyzer", "streetsidesoftware.code-spell-checker"]
        }
      }
    }

That’s it! Run the “Dev Containers: Reopen in Container” command in VSCode, give it a few minutes, and you’ll have your full development environment ready.
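One optional tidy-up: the long `onCreateCommand` one-liner can get hard to read, so the same steps could live in a small script instead. The sketch below assumes a hypothetical file at `.devcontainer/on-create.sh`, with `onCreateCommand` changed to run it (for example `sh .devcontainer/on-create.sh`). The function name is made up, and the values are the same test credentials as in the one-liner.

```shell
#!/bin/sh
# Hypothetical .devcontainer/on-create.sh: writes throwaway AWS settings for MinIO.
# The target home directory can be passed as $1; it defaults to $HOME.
write_aws_test_config() {
  aws_dir="${1:-$HOME}/.aws"
  mkdir -p "$aws_dir"

  # Same content as the echo -e one-liner, one file at a time.
  cat > "$aws_dir/config" <<'EOF'
[default]
region = local
EOF

  cat > "$aws_dir/credentials" <<'EOF'
[default]
aws_access_key_id = minioadmin
aws_secret_access_key = minioadmin
EOF
}
```

Calling `write_aws_test_config` with no argument at the end of the script writes both files into the current user's home directory.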